[Figure: DOS Web Browsers: Arachne (left) and Dillo]

In all the time I have been playing with computers, going back to when Winston Smith took on Oceania, I have never had an opportunity to try out networking and get on the internet from plain DOS on a PC. So I fired up Oracle VirtualBox and went to consult with The Great Search Engine.

It turns out a lot of folks have not only done this but also taken pains to document what they did. With a bit of trial and error, I now have a functional PC DOS VirtualBox appliance equipped with CD ROM and SoundBlaster 16 drivers, a Packet Driver for the AMD PCnet-FAST III network adapter, the mTCP Suite (a TCP/IP stack with client and server networking applications), and two DOS-based graphical web browsers - Dillo and Arachne.

The DOS VirtualBox appliance specs I used: 64 MB RAM, PIIX3 chipset, PS/2 mouse, no I/O APIC, no EFI, 1 CPU, no PAE/NX, 32 MB video RAM, a floppy controller, an IDE controller with a 512 MB fixed-size (not dynamically allocated) hard drive and a CD-ROM drive, a SoundBlaster 16 audio card, a bridged PCnet-FAST III network adapter, two serial ports and a USB 1.1 OHCI controller.

I needed a way to make downloaded files available to the vbox appliance, and settled on floppy disk and CD-ROM images. I used Magic ISO Maker (Setup_MagicISO.exe) to create the floppy and CD images that I attached to the appliance to transfer downloaded files. The unregistered version of Magic ISO Maker cannot create images over 300 MB, but this was not a problem for my use cases: 3.5" 1.44 MB floppy disk images and CD ROM ISOs well under the 300 MB limit. This way I also have an archive of the floppy disks and CD ROMs used to build this VM. I avoided VirtualBox's shared folder feature.
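As a rough guide to what goes on a floppy image and what needs a CD ISO, here is a minimal Python sketch of the capacity arithmetic. It assumes the standard 1.44 MB geometry and the usual FAT12 layout for that format; the function name `fits_on_floppy` is made up for illustration, and the two sizes checked at the bottom are the ones quoted later in this post.

```python
# Geometry of a standard 3.5" high-density ("1.44 MB") floppy disk.
CYLINDERS, HEADS, SECTORS_PER_TRACK, BYTES_PER_SECTOR = 80, 2, 18, 512

# Raw size of the image file: 80 * 2 * 18 * 512 bytes.
RAW_BYTES = CYLINDERS * HEADS * SECTORS_PER_TRACK * BYTES_PER_SECTOR

# Usual FAT12 overhead on this format (an assumption about the image layout):
# 1 boot sector, two 9-sector FATs and 14 root directory sectors.
OVERHEAD_SECTORS = 1 + 2 * 9 + 14
USABLE_BYTES = RAW_BYTES - OVERHEAD_SECTORS * BYTES_PER_SECTOR


def fits_on_floppy(size_bytes: int) -> bool:
    """Return True if one file of this size fits on an empty 1.44 MB floppy."""
    # Files occupy whole 512-byte clusters on this format, so round up.
    clusters = -(-size_bytes // BYTES_PER_SECTOR)
    return clusters * BYTES_PER_SECTOR <= USABLE_BYTES


if __name__ == "__main__":
    print(RAW_BYTES, USABLE_BYTES)
    print(fits_on_floppy(1272 * 1024))       # the Arachne installer (1,272 KB)
    print(fits_on_floppy(5 * 1024 * 1024))   # the extracted Dillo files (over 5 MB)
```

The check only covers a single file on an otherwise empty disk; several files together would each be rounded up to whole clusters.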

[Figure: Magic ISO Maker for 1.44 MB Floppy Disk and CD ROM ISO image creation]

IBM PC DOS 2000

[Figure: IBM PC DOS 2000 (PC DOS 7.0 Revision 1) Setup]

I remembered switching from Microsoft MS DOS 6.22 to IBM PC DOS 7 based on rumors that the latter was better, faster and more stable, with better memory management, and generally a good thing to do as it was maintained by Big Blue. While looking for it, I found out there was a later Revision 1 of PC DOS 7 that added Y2K compatibility, marketed as "PC DOS 2000". I grabbed the six PC DOS 2000 3.5" 1.44 MB floppy disk images and installed everything, including IBM Antivirus and Central Point Backup.

Using PC DOS 7's "E" editor, I edited CONFIG.SYS to increase the memory available for DOS environment variables (the /E:1024 switch on the SHELL line) and to load HIMEM.SYS and EMM386.EXE. Together with DOS=HIGH,UMB, this keeps as much conventional memory free as possible by moving DOS itself into the high memory area and letting drivers and boot-time programs load into upper memory.

CONFIG.SYS:

SHELL=C:\DOS\COMMAND.COM /E:1024 /P

DEVICE=C:\DOS\HIMEM.SYS

DOS=HIGH,UMB

DEVICEHIGH=C:\DOS\EMM386.EXE NOEMS

CD ROM SUPPORT

Following the typical process of adding CD ROM support to PC DOS, I picked up IBMIDECD.SYS and MSCDEX.EXE, and transferred them to the C:\CDROM directory on the vbox VM via a floppy disk image created using Magic ISO Maker. I then added the usual CD ROM related lines to CONFIG.SYS and AUTOEXEC.BAT:

CONFIG.SYS:

DEVICEHIGH=C:\CDROM\IBMIDECD.SYS /D:IBMCD001

AUTOEXEC.BAT:

LH C:\CDROM\MSCDEX /D:IBMCD001 /M:10

After reboot, the CD ROM drive appeared as drive D:.
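The one detail that is easy to get wrong in the two lines above is the /D: device name: the name given to the CD-ROM driver in CONFIG.SYS has to be the same name passed to MSCDEX in AUTOEXEC.BAT. Here is a small Python sketch of that consistency check, run on the host against copies of the two files. The helper names `cd_device_names` and `names_match` are hypothetical.

```python
import re

# Matches the /D:name switch used by both the CD-ROM driver and MSCDEX.
DEVICE_SWITCH = re.compile(r"/D:(\S+)", re.IGNORECASE)


def cd_device_names(text: str, program: str) -> set:
    """Collect /D: device names from lines that mention the given program."""
    names = set()
    for line in text.splitlines():
        if program.upper() in line.upper():
            names.update(name.upper() for name in DEVICE_SWITCH.findall(line))
    return names


def names_match(config_sys: str, autoexec_bat: str) -> bool:
    """True when the driver and MSCDEX agree on a non-empty set of names."""
    driver = cd_device_names(config_sys, "IBMIDECD.SYS")
    mscdex = cd_device_names(autoexec_bat, "MSCDEX")
    return bool(driver) and driver == mscdex


if __name__ == "__main__":
    config_sys = r"DEVICEHIGH=C:\CDROM\IBMIDECD.SYS /D:IBMCD001"
    autoexec_bat = r"LH C:\CDROM\MSCDEX /D:IBMCD001 /M:10"
    print(names_match(config_sys, autoexec_bat))
```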

SOUNDBLASTER SB 16 SUPPORT

[Figure: Creative Labs SoundBlaster SB 16 DOS Driver Installation]

Creative Labs still provides a download link to the "sbbasic.exe" self-extracting driver installer for SoundBlaster 16 audio cards for DOS. I put it on a floppy image using Magic ISO Maker, connected that floppy image to the DOS VM, copied sbbasic.exe to a temporary directory and executed it from the DOS prompt. This extracted an INSTALL.EXE along with a bunch of *.PVL and sundry files. I then ran INSTALL.EXE to install the SB16 driver and utilities on DOS. The installer also took care of updating CONFIG.SYS and AUTOEXEC.BAT. I later edited CONFIG.SYS to change "DEVICE=" to "DEVICEHIGH=" in the line loading the SB 16 driver.

CONFIG.SYS:

DEVICEHIGH=C:\SB16\DRV\CSP.SYS /UNIT=0 /BLASTER=A:220

AUTOEXEC.BAT:

SET SOUND=C:\SB16

SET BLASTER=A220 I5 D1 H5 P330 T6

SET MIDI=SYNTH:1 MAP:E

C:\SB16\DIAGNOSE /S

C:\SB16\MIXERSET /P /Q

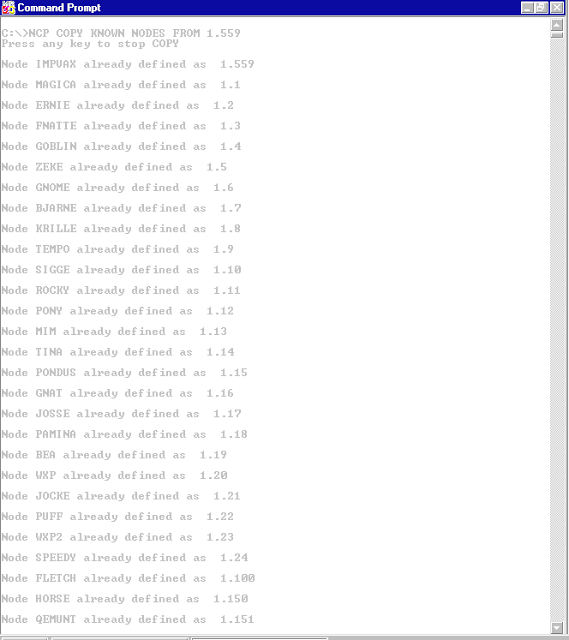

AMD AM79C973 PCnet-FAST III Network Adapter Packet Driver for DOS

[Figure: AMD AM79C973 PCnet-FAST III Network Adapter Packet Driver for DOS initialization at boot]

AMD's "PCnet Software Version 3.2 October 1996" NIC driver floppy disk includes the DOS packet driver for the PCnet-FAST III (AM79C973) network adapter.

I created a C:\PCNTDRV directory and copied everything on the diskette into it (using XCOPY with the /E switch to preserve the directory structure). I then added the following to AUTOEXEC.BAT to load the DOS packet driver at boot time:

LH C:\PCNTDRV\PKTDRVR\PCNTPK INT=0X60

On rebooting, the packet driver located the PCnet-FAST III NIC correctly and reported a MAC address that matched the one configured in the VirtualBox settings for the VM. There is also a nifty set of tools in the C:\PCNTDRV\PKTDRVR directory that can be used to check and monitor network traffic, for example C:\PCNTDRV\PKTDRVR\PKTSTAT.

mTCP Suite

[Figure: mTCP suite TCP/IP stack and applications for DOS: ping and pkttool scan]

Now that the packet driver for the network adapter was working, it was time to try some basic TCP/IP utilities. Michael B. Brutman's amazing mTCP Suite comes with the standard TCP/IP client applications as well as a web server and an FTP server. The current version of the entire suite is around 964 KB in size. Using Magic ISO Maker, I created a floppy image containing everything extracted from the downloaded mTCP zip file and copied the mTCP Suite into the DOS appliance under the directory C:\MTCP.

Following the documentation, I gave it a static IP address (you can use DHCP if you wish; read the PDF manual) by putting the following settings into a C:\MTCP\MYCONFIG.TXT file:

PACKETINT 0X60

IPADDR 10.100.0.20

NETMASK 255.255.255.0

GATEWAY 10.100.0.1

NAMESERVER 10.100.0.1

Then I set the MTCPCFG environment variable in AUTOEXEC.BAT to point to the configuration file:

SET MTCPCFG=C:\MTCP\MYCONFIG.TXT

and rebooted. That was all that was needed to get mTCP's TCP/IP stack and tools to work.
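With a static setup like the one above, the usual mistakes are a gateway that is not on the same subnet as the machine, or a PACKETINT value that does not match the packet driver line in AUTOEXEC.BAT. Below is a minimal Python sketch of a sanity check that can be run on the host against a copy of MYCONFIG.TXT. The function names are made up, and the parser only handles the simple "KEY value" lines shown in this post, not the full mTCP configuration syntax.

```python
import ipaddress


def parse_mtcp_config(text: str) -> dict:
    """Parse simple 'KEY value' lines into a dict keyed by upper-case name."""
    settings = {}
    for line in text.splitlines():
        parts = line.split(None, 1)
        if len(parts) == 2:
            settings[parts[0].upper()] = parts[1].strip()
    return settings


def check_static_config(settings: dict) -> list:
    """Return a list of problems found; an empty list means none were found."""
    problems = []
    iface = ipaddress.ip_interface(f"{settings['IPADDR']}/{settings['NETMASK']}")
    gateway = ipaddress.ip_address(settings["GATEWAY"])
    if gateway not in iface.network:
        problems.append(f"gateway {gateway} is outside {iface.network}")
    if gateway == iface.ip:
        problems.append("gateway and IPADDR are the same address")
    # Packet drivers conventionally hook a software interrupt in 0x60-0x80.
    vector = int(settings["PACKETINT"], 16)
    if not 0x60 <= vector <= 0x80:
        problems.append(f"PACKETINT {vector:#x} is outside 0x60-0x80")
    return problems


if __name__ == "__main__":
    sample = """PACKETINT 0X60
IPADDR 10.100.0.20
NETMASK 255.255.255.0
GATEWAY 10.100.0.1
NAMESERVER 10.100.0.1
"""
    print(check_static_config(parse_mtcp_config(sample)))
```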

mTCP includes an SNTP client, great news for me, being an NTP time sync nut who runs multiple public time-servers contributing to the NTP Pool Project. I added the following at the bottom of AUTOEXEC.BAT to sync the system clock with pool.ntp.org at boot:

SET TZ=UTC

C:\MTCP\SNTP -SET POOL.NTP.ORG
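For the curious, the exchange behind an SNTP sync like the one above is tiny: the client sends a 48-byte UDP packet to port 123 and reads the server's transmit timestamp out of the 48-byte reply. The Python sketch below shows the packet layout only. It is not how mTCP implements its client, the function names are made up, and it ignores network delay compensation.

```python
import struct

# Seconds between the NTP epoch (1900-01-01) and the Unix epoch (1970-01-01).
NTP_UNIX_EPOCH_DELTA = 2_208_988_800


def build_sntp_request() -> bytes:
    """Build a 48-byte client request: LI=0, version 3, mode 3 (client)."""
    first_byte = (0 << 6) | (3 << 3) | 3  # works out to 0x1B
    return bytes([first_byte]) + bytes(47)


def parse_transmit_time(reply: bytes) -> float:
    """Return the server's transmit timestamp as Unix time in seconds (UTC)."""
    if len(reply) < 48:
        raise ValueError("SNTP reply must be at least 48 bytes")
    # Bytes 40-47 hold the transmit timestamp: 32-bit seconds since 1900
    # followed by a 32-bit binary fraction of a second, both big-endian.
    seconds, fraction = struct.unpack("!II", reply[40:48])
    return seconds - NTP_UNIX_EPOCH_DELTA + fraction / 2**32


if __name__ == "__main__":
    # A synthetic reply stamped exactly one day after the Unix epoch.
    fake_reply = bytes(40) + struct.pack("!II", NTP_UNIX_EPOCH_DELTA + 86400, 0)
    print(len(build_sntp_request()), parse_transmit_time(fake_reply))
```

To try it against a real server, the request would be sent with a UDP socket to port 123 and the reply passed to `parse_transmit_time`.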

ARACHNE WEB BROWSER FOR DOS

[Figure: Arachne: DOS Web Browser]

I downloaded the Arachne 1.97; GPL zipped self-extracting EXE and extracted it to a temporary folder on my Windows 10 host laptop. This resulted in a 1,272 KB A197GPL.EXE which Windows 10 refused to execute. Using Magic ISO Maker, I created a 1.44 MB floppy disk image containing A197GPL.EXE, transferred it into a temporary directory on the DOS vbox and executed it from DOS. Surprise! A197GPL.EXE is not just a self-extracting archive, it is an installer that installed Arachne to C:\ARACHNE (the default) while showing a nifty white-on-blue progress bar.

The installer left me inside the C:\ARACHNE directory with instructions to execute ARACHNE.BAT to configure it. Doing so presented a graphical setup procedure with a series of screens to define the screen resolution and internet connection. I selected the "Packet Wizard" since I already had a Packet Driver up and running for the network adapter. It auto-detected the Packet Driver successfully and then presented the usual DHCP vs. Static Address options for TCP/IP configuration. I configured a static IP address (similar to the mTCP setup).

The setup procedure then asked for email configuration (Arachne is also an email client), which I skipped, retaining the non-working defaults for now. It then presented a screen full of sundry options (long filename support, timezone, character set etc.) before writing out the configuration file to C:\ARACHNE\ARACHNE.CFG and presenting an Arachne Options screen. I indicated that I was happy with the displayed options, and was finally presented with a web-browser screen with a URL address bar.

Sadly, Arachne does not support SSL-secured HTTPS. When I tried to browse to, for example, www.bankofamerica.com, it seemed to go into a loop, forwarding HTTPS to HTTP and back to HTTPS. It is not too tight a loop - I could click the "X" on the toolbox and interrupt it. Perhaps changing the configuration item "HTTPS2HTTP Yes" to "No" under the "[auto-added]" section will address this; something to try later.

[Figure: Arachne DOS Browser Desktop]

I made some minor tweaks to C:\ARACHNE\ARACHNE.CFG (full ARACHNE.CFG file here) for the home and search pages in the [internet] section:

HomePage http://www.google.com/

SearchPage http://www.google.com/

SearchEngine http://www.google.com/search?q=

Arachne tries to be many things. It includes a phone dialer. It is an email client. Clicking on the little icon of the desk labelled "Desktop" launches a file manager.

DILLO WEB BROWSER FOR DOS

[Figure: Dillo: DOS Web Browser]

I downloaded the zip file of the latest version of the Dillo DOS web browser, 3.02b, from archive.org. The total size of the extracted files was over 5 MB. I used Magic ISO Maker to create an ISO CD image containing the extracted files and connected that ISO image to the CD ROM drive of the vbox DOS VM. I then copied the files into the C:\DILLODOS directory, configured the network details (IP address, netmask, DNS name server, gateway etc.) in C:\DILLODOS\BIN\WATTCP.CFG and launched Dillo by executing DILLO.BAT from the C:\DILLODOS directory.

That was all I needed to do to fire Dillo up. The browser (or perhaps the WATTCP stack it uses) figured out the Packet Driver and launched straightaway without any further questions about how to connect.

Dillo is faster than Arachne. It also handles secure web sites that use HTTPS.

In many ways, Dillo is far closer to a modern browser than Arachne. Given a choice, I would probably use Dillo as my primary web browser on a PC running MS DOS or PC DOS.

CONFIG.SYS and AUTOEXEC.BAT

Here are the two PC DOS startup files in full for reference.

CONFIG.SYS

SHELL=C:\DOS\COMMAND.COM /E:1024 /P

FILES=40

BUFFERS=10

DEVICE=C:\DOS\HIMEM.SYS

DOS=HIGH,UMB

DEVICEHIGH=C:\DOS\EMM386.EXE NOEMS

DEVICEHIGH=C:\SB16\DRV\CSP.SYS /UNIT=0 /BLASTER=A:220

DEVICEHIGH=C:\DOS\SETVER.EXE

DEVICEHIGH=C:\DOS\POWER.EXE ADV:MAX

DEVICEHIGH=C:\CDROM\IBMIDECD.SYS /D:IBMCD001

AUTOEXEC.BAT

@ECHO OFF

SET SOUND=C:\SB16

SET BLASTER=A220 I5 D1 H5 P330 T6

SET MIDI=SYNTH:1 MAP:E

C:\SB16\DIAGNOSE /S

C:\SB16\MIXERSET /P /Q

SET PATH=C:\DOS;C:\MTCP;C:\BIN;%PATH%

SET TEMP=C:\TEMP

SET TMP=C:\TEMP

LH C:\DOS\MOUSE.COM

LH C:\DOS\DOSKEY.COM

LH C:\CDROM\MSCDEX /D:IBMCD001 /M:10

LH C:\PCNTDRV\PKTDRVR\PCNTPK INT=0X60

SET MTCPCFG=C:\MTCP\MYCONFIG.TXT

SET TZ=UTC

C:\MTCP\SNTP -SET POOL.NTP.ORG

SET IBMAV=C:\DOS

CALL C:\DOS\IBMAVDR.BAT C:\DOS\

ECHO ---

ECHO TO CHANGE IP ADDRESS, USE THE "E" EDITOR TO EDIT:

ECHO - C:\MTCP\MYCONFIG.TXT

ECHO - C:\ARACHNE\ARACHNE.CFG

ECHO - C:\DILLODOS\BIN\WATTCP.CFG

ECHO TO RUN ARACHNE WEB BROWSER:

ECHO - CD C:\ARACHNE

ECHO - ARACHNE.BAT

ECHO TO RUN DILLO WEB BROWSER:

ECHO - CD C:\DILLODOS

ECHO - DILLO.BAT

ECHO ---

Downloads

I have archived all the floppy disk and CD ISO images mentioned in this post, as well as a complete VirtualBox appliance, at my Google Drive.